`Mnist_model.add(Dense(10, activation='softmax'))`

Here is your revised code: class NeuralNet(nn.Module): The linear layer should therefore take 28*13*13=4732 input features.The max pooling layer will halve your spatial size, so that you’ll en up with.Therefore you’ll end up with 28 activation maps of spatial size 26x26. Since you are not using any padding and leave the stride and dilation as 1, a kernel size of 3 will crop 1 pixel in each spatial dimension. The 28 is given by the number of kernels your conv layer is using. After the first conv layer, your output will have the shape.Your first conv layer expects 28 input channels, which won’t work, so you should change it to 1.Īlso the Dense layers in Keras give you the number of output units.įor nn.Linear you would have to provide the number if in_features first, which can be calculated using your layers and input shape or just by printing out the shape of the activation in your forward method.

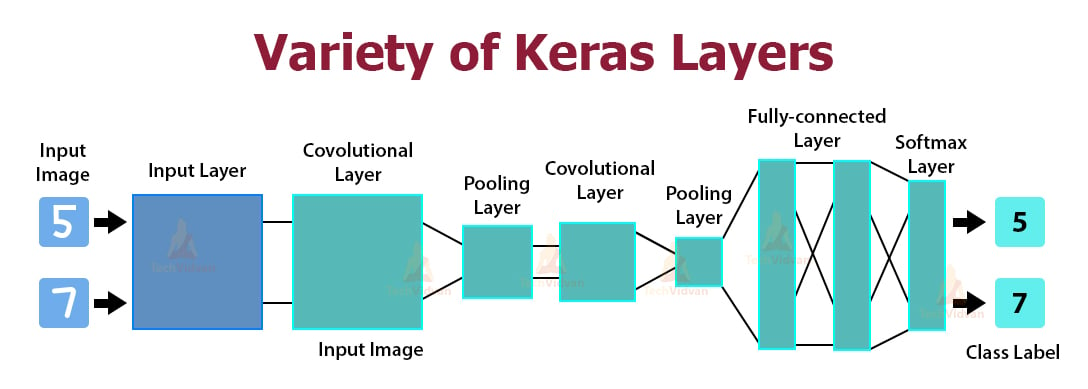

The in_channels in Pytorch’s nn.Conv2d correspond to the number of channels in your input.īased on the input shape, it looks like you have 1 channel and a spatial size of 28x28. X = self.hidden2(F.relu(self.hidden5(x))) X = self.hidden2(F.relu(self.hidden3(x))) Self.hidden3 = nn.Linear(128, 10) # equivalent to Dense in keras I couldn’t find out what should be output channel in Pytorch corespond with this keras mode. Model.add(Dense(10,activation=tf.nn.softmax))Īnd this is my Pytorch model, and I am not sure am I doing right or not as I am new in CNN and Pytorch. Model.add(Dense(128, activation=tf.nn.relu)) Model.add(Flatten()) # Flattening the 2D arrays for fully connected layers Model.add(MaxPooling2D(pool_size=(2, 2))) Model.add(Conv2D(28, kernel_size=(3,3), input_shape=input_shape)) Here is the original keras model: input_shape = (28, 28, 1) I’m trying to convert CNN model code from Keras to Pytorch.
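The 28x28 → 26x26 → 13x13 → 4732 chain follows the output-size formula given in the PyTorch `nn.Conv2d` and `nn.MaxPool2d` docs: `out = floor((in + 2*padding - dilation*(kernel - 1) - 1) / stride) + 1`. The helper below is a small sketch of that arithmetic; `conv_out` is a hypothetical name, not a library function. It assumes a square 1x28x28 input and no padding, as in the question.

```python
def conv_out(size: int, kernel: int, stride: int = 1, padding: int = 0, dilation: int = 1) -> int:
    """Hypothetical helper: output length of one spatial dim after a conv/pool window."""
    return (size + 2 * padding - dilation * (kernel - 1) - 1) // stride + 1


# Conv2d(1, 28, kernel_size=3), no padding, stride 1: loses 1 pixel per border.
after_conv = conv_out(28, kernel=3)
# MaxPool2d(2): stride defaults to the kernel size, so each dimension is halved.
after_pool = conv_out(after_conv, kernel=2, stride=2)
# One activation map per conv kernel.
num_channels = 28
in_features = num_channels * after_pool * after_pool

print(after_conv, after_pool, in_features)  # 26 13 4732
```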
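A minimal sketch of what a PyTorch counterpart to the Keras model could look like, assuming the layer stack shown in the question (conv without activation, 2x2 max pooling, flatten, 128-unit ReLU layer, 10 outputs). The attribute names `conv`, `pool`, `hidden`, and `out` are hypothetical choices for illustration, not the names used in the original answer.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class NeuralNet(nn.Module):
    """Sketch of a PyTorch equivalent of the Keras model (attribute names are hypothetical)."""

    def __init__(self) -> None:
        super().__init__()
        # 1 input channel; 28 kernels -> 28 output channels, like Keras Conv2D(28, (3, 3)).
        self.conv = nn.Conv2d(in_channels=1, out_channels=28, kernel_size=3)
        # Like Keras MaxPooling2D((2, 2)); stride defaults to kernel_size.
        self.pool = nn.MaxPool2d(kernel_size=2)
        # in_features = 28 * 13 * 13 = 4732, then 128 units like Dense(128).
        self.hidden = nn.Linear(28 * 13 * 13, 128)
        # 10 output units like Dense(10); returns logits (no softmax here).
        self.out = nn.Linear(128, 10)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        x = self.pool(self.conv(x))  # [batch_size, 28, 13, 13]
        x = torch.flatten(x, 1)      # [batch_size, 4732]
        x = F.relu(self.hidden(x))   # [batch_size, 128]
        return self.out(x)           # [batch_size, 10]


model = NeuralNet()
# PyTorch expects [N, C, H, W]; a Keras-style [N, 28, 28, 1] batch would need x.permute(0, 3, 1, 2).
dummy = torch.randn(4, 1, 28, 28)
logits = model(dummy)
print(logits.shape)  # torch.Size([4, 10])
```

The final layer returns raw logits rather than applying softmax as the Keras `Dense(10, activation=tf.nn.softmax)` does. If you train with `nn.CrossEntropyLoss`, pass it these logits, since that loss applies log-softmax internally; call `torch.softmax(logits, dim=1)` only when you need probabilities.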
